This page contains the mathematics that I and the rest of my class have learned over the course of our junior year. All the math concepts are listed below, as well as examples and explanations for each concept.

Probability

The dictionary definition of Probability is as follows: Probability is the likelihood of an event occurring.

This definition is not up to par when talking about math, as it refers to nothing except an event and the vague idea of chance that surrounds it. Mathematical definitions usually require something more concrete and applicable. Following this notion, a mathematician would define probability as follows: Probability is the number of favorable outcomes divided by the total number of possible outcomes of an event, assuming every outcome is equally likely. In other words, count the ways the thing you want can happen, and divide by the count of everything that could happen. For example, let's say that you want to flip a coin and get heads. There is one favorable outcome (heads), and it is put over (divided by) the total number of outcomes of a coin toss, which is two: heads or tails. That gives one out of two, or 50%. Another example would be if you wanted to roll a six-sided die and get a prime number. There are three prime numbers you could roll (2, 3 and 5) and three numbers that are not prime (1, 4 and 6). The probability would be three out of six, or 1/2: three favorable outcomes over the total number of numbers you could roll, one through six.
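The two examples above can be checked with a few lines of Python. This is only a sketch: the function name `probability` and the lists `coin` and `die` are made up for illustration, and it assumes every outcome is equally likely.

```python
from fractions import Fraction

def probability(favorable, outcomes):
    """Favorable outcomes over all outcomes (assumes each is equally likely)."""
    return Fraction(len(favorable), len(outcomes))

coin = ["heads", "tails"]
die = [1, 2, 3, 4, 5, 6]

p_heads = probability(["heads"], coin)   # one favorable outcome out of two
p_prime = probability([2, 3, 5], die)    # three primes out of six faces

print(p_heads, p_prime)  # prints: 1/2 1/2
```

Using `Fraction` keeps the answer as an exact ratio instead of a rounded decimal.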

Theoretical and Experimental Probability

Now, the definitions and explanations above are fine and dandy, but they only address part of probability's definition. Theoretical and Experimental probability are the two sides of the definition. Theoretical probability is the one described above: the prediction of an outcome based on what you know about the event before experimenting with it. But, as we all know, expecting an outcome and getting that same outcome are two different things. Rolling a one on a six-sided die seems simple enough: a one out of six chance, or about 17%. But rolling a one isn't guaranteed. You could roll the die six times and not get a single one, but instead two threes and four fives. This is where we step into the bounds of Experimental probability. Whereas Theoretical probability calculates the chance of something beforehand, Experimental probability deals with the results afterwards. If you rolled that die a hundred times, you would notice a difference in how often the different numbers came up; they wouldn't be nice and neat fractions like one out of six. Those observed counts are what you would use to calculate the probability. A one might come up 26% of the time, or 19% of the time, instead of the theoretical 17%. This is the difference between Theoretical and Experimental probability.
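To see the gap between the two for yourself, here is a small simulation sketch. The function name `roll_experiment` and the fixed seed are my own choices for illustration; the seed only makes the run repeatable, and a different seed will give different percentages.

```python
import random
from collections import Counter

def roll_experiment(rolls, seed=0):
    """Roll a fair six-sided die `rolls` times and return each face's observed frequency."""
    rng = random.Random(seed)  # seeded so the experiment can be repeated
    counts = Counter(rng.randint(1, 6) for _ in range(rolls))
    return {face: counts[face] / rolls for face in range(1, 7)}

theoretical = 1 / 6
experimental = roll_experiment(100)

for face, freq in experimental.items():
    print(f"face {face}: experimental {freq:.0%}, theoretical {theoretical:.0%}")
```

The experimental column will usually wobble around 17% rather than hit it exactly.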

Fundamental Counting Principle

The Fundamental Counting Principle is an overly fancy way of describing how to calculate the total number of outcomes that one or more events have. For a single event, it's easy: you just count up however many outcomes you can get from it. Six outcomes for a die, two for a coin, etc. For two events, it gets slightly more complex: take the number of outcomes from each event and multiply them together. An example would be if you were deciding what combination of clothes to wear for the day, and you selected from a pool of options. Let's say you have three pairs of pants and four shirts, and you want to know just how many ways you can show off your fashion sense that day. You would multiply the four choices of shirts by the three choices of pants, and you would get twelve total clothing options for the day. Now, in addition to your odd way of dressing yourself by multiplication and chance, you also want to pick from two pairs of socks. You would multiply the same way, with one more factor: 4 x 3 x 2, which would net you 24 different ways of getting dressed. Remember, while this works in a mathematical sense, it would be better to just use a decent fashion sense when picking out clothing; if you used math like this in all your daily activities, you'd walk home every day with 47 apples and 35 watermelons.
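The clothing example can be checked two ways: by multiplying the counts, and by actually listing every outfit. The item names below are placeholders I made up; only the counts (4 shirts, 3 pants, 2 socks) come from the example.

```python
from itertools import product
from math import prod

# Placeholder names; only the number of items in each list matters.
shirts = ["shirt A", "shirt B", "shirt C", "shirt D"]
pants = ["pants A", "pants B", "pants C"]
socks = ["socks A", "socks B"]

# Counting principle: multiply the number of choices for each event.
total_by_multiplying = prod(len(choices) for choices in (shirts, pants, socks))

# Brute force: list every (shirt, pants, socks) outfit and count them.
outfits = list(product(shirts, pants, socks))

print(total_by_multiplying, len(outfits))  # prints: 24 24
```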

Relative Area

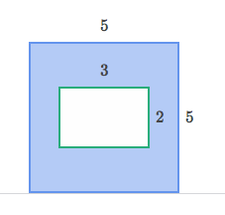

Relative Area is related to probability, even though it seems more akin to geometry. The diagram above and the example below explain why.

The diagram above is one which I am sure you have seen before somewhere in your math career: find the area of the shaded region, subtract the other region's area from it, and so on. For probability purposes, it's even simpler. Probability deals with chances and outcomes, and for this diagram it's no different. The only thing that changes is how the question about the diagram is framed. Let's say that this square isn't in fact a square, but an oddly shaped prototype dart board that never made it into mass production. The white square in the center is where you want your darts to hit, and the blue is where you don't. If you were playing darts with this board, you would want to know your chances of hitting the white square instead of the blue region. Well, all you would need to do is find the area of the white region and the area of the whole board, and put the preferred area over the total, just as probability's definition says to do. The relative area, or your chance of hitting the white square, would be 6 out of 25, or 24%: the preferred area over the total area of both regions. (This assumes a dart is equally likely to land anywhere on the board.)
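The arithmetic is short enough to sketch in code. The function name `relative_area_probability` is made up for illustration, and the areas 6 and 25 are simply the ones quoted in the example.

```python
from fractions import Fraction

def relative_area_probability(target_area, total_area):
    """Chance of landing in the target region, assuming every spot is equally likely."""
    if not 0 <= target_area <= total_area:
        raise ValueError("target area must be between 0 and the total area")
    return Fraction(target_area, total_area)

p_white = relative_area_probability(6, 25)
print(p_white, f"{float(p_white):.0%}")  # prints: 6/25 24%
```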

Permutations

A Permutation is the mathematical term for an arrangement of a set of values where order is important. Let's say that you have three numbers: 1, 2 and 3. The permutations of those numbers are [1 2 3], [1 3 2], [3 2 1], [3 1 2], [2 3 1], and [2 1 3]. These are all of the arrangements of these values that you can have in a specific order, with no arrangement repeated. A good example would be if you had to choose a new student president, vice president, and secretary from a pool of five people, and for some contrived reason had to find out how many different ways you could select those people for the positions. There are five choices for president, four left for vice president, and three left for secretary, so there are 5 x 4 x 3 = 60 ways.
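Both examples can be listed out with Python's standard library. The candidate names are invented for illustration; only the pool size of five and the three positions come from the example.

```python
from itertools import permutations
from math import perm

# Every ordering of 1, 2 and 3.
orderings = list(permutations([1, 2, 3]))
print(len(orderings))  # prints: 6

# Hypothetical pool of five candidates for president, vice president, secretary.
candidates = ["Ana", "Ben", "Cam", "Dee", "Eli"]
slates = list(permutations(candidates, 3))  # order matters: position 1 is president, etc.

print(len(slates), perm(5, 3))  # prints: 60 60
```

Note that `("Ana", "Ben", "Cam")` and `("Ben", "Ana", "Cam")` count as different slates, because a different person ends up president.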

Combinations

Combinations are much simpler than permutations: a combination is just a group of values whose order doesn't matter, so [1 2 3] and [3 2 1] count as the same combination. Permutation is the strict aunt that never lets you snack between Easter brunch and dinner and always fusses over the arrangement of the egg salad and mashed potatoes on the dinner table. A Combination is the aunt that doesn't care what you eat on Easter or when, just that you're there for Easter Sunday.
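A quick sketch of the difference, reusing the idea of picking three people out of five. The names are invented for illustration, and here the three people form a committee with no separate positions.

```python
from itertools import combinations, permutations
from math import comb

people = ["Ana", "Ben", "Cam", "Dee", "Eli"]  # hypothetical pool of five

committees = list(combinations(people, 3))    # order ignored
ordered_picks = list(permutations(people, 3)) # order counted

print(len(committees), comb(5, 3))  # prints: 10 10
print(len(ordered_picks))           # prints: 60
```

Each committee of three can be ordered in 6 ways, which is why the 60 ordered picks shrink to 60 / 6 = 10 combinations.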

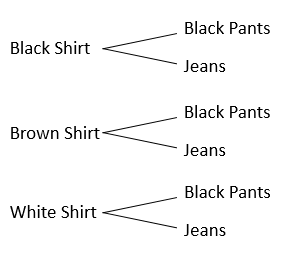

Tree Diagramming

A Tree Diagram is very simple: it is a type of chart used to represent the probability of something and the fundamental counting principle. In the diagram above, we see an event similar to the one in the earlier explanation of the counting principle. A tree diagram lists out all the possibilities that the counting principle only counts. From 3 shirts and 2 pairs of pants, you get 6 outcomes for clothing choice, and the end result shows you that there is a one in six chance of a specific outcome, assuming each choice is equally likely; all you need to do is follow the tree branches.
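A tree like this can also be printed as text. In this sketch the clothing names and the function name `print_tree` are made up; only the counts (3 shirts, 2 pairs of pants) come from the example.

```python
from fractions import Fraction

shirts = ["red shirt", "blue shirt", "green shirt"]  # placeholder names
pants = ["jeans", "khakis"]                          # placeholder names

def print_tree(first_choices, second_choices):
    """Print one branch per first choice and one sub-branch per second choice."""
    leaves = []
    for first in first_choices:
        print(first)
        for second in second_choices:
            print(f"    - {second}")
            leaves.append((first, second))
    return leaves

leaves = print_tree(shirts, pants)
chance = Fraction(1, len(leaves))  # each leaf equally likely
print(f"{len(leaves)} outcomes, each with probability {chance}")
```

The last line reports 6 outcomes with probability 1/6 each, matching the count of leaves at the ends of the branches.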

"e" and Logarithms

The first subject here, "e," is a number. I know that "e" doesn't sound like a number, but in the math world it is, and it is known as "Euler's number." It is an irrational number, roughly 2.71828, that serves as the base of the natural logarithm. It is considered important because it is a mathematical constant that is related to continuous change, and so it is used in things like calculating compound interest for bank loans. The video below has a good deal of knowledge on the number "e," far more than I do.
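One way to watch "e" appear is the classic compound interest setup: 1 unit of money at 100% yearly interest, compounded more and more often. This is a sketch under that assumption, and the function name `compound_growth` is made up for illustration.

```python
import math

def compound_growth(periods):
    """Value of 1 unit after one year at 100% interest, compounded `periods` times."""
    return (1 + 1 / periods) ** periods

for periods in (1, 12, 365, 1_000_000):
    print(periods, compound_growth(periods))

print("e =", math.e)
```

The more often the interest compounds, the closer the result creeps toward `math.e`.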

Now, logarithms are the next point of interest. A logarithm is just a way of asking "how many of one number do we multiply together to get another number?" One of the best examples for this is 2^3 = 8, or 2 x 2 x 2 = 8. A total of three twos were multiplied to get to eight, and that count of three is the logarithm. So you need to multiply three twos together to get to eight, and that is written as log2(8) = 3, where the 2 is the base. "How many twos must be multiplied together to get eight?" is the question that log2(8) represents.
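The "count the twos" idea can be written out directly and compared with Python's built-in `math.log2`. The helper `count_multiplications` is a made-up name, and it only handles whole-number cases like the one in the example.

```python
import math

def count_multiplications(base, target):
    """Count how many copies of `base` are multiplied together to reach `target`."""
    count, value = 0, 1
    while value < target:
        value *= base
        count += 1
    if value != target:
        raise ValueError(f"{target} is not a whole-number power of {base}")
    return count

print(count_multiplications(2, 8))  # prints: 3
print(math.log2(8))                 # the same question asked of the math library
```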

The Law of Large Numbers

This law addresses the gap between experimental and theoretical probability, stating that as the number of experiments grows very large, the difference between the experimental and theoretical results shrinks toward zero. If you flipped a coin four times, and you got three tails and one heads, then you might come to the conclusion that the probability for heads is not 1/2, but 1/4 instead. If you flipped that coin 10,000 times, then the fraction of heads you got would land close enough to 1/2 that you could say, from your experiments, that the probability was 1/2.
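A simulated coin makes the law easy to watch. This is a sketch with a made-up function name, `heads_fraction`, and a fixed seed so the run is repeatable; the exact fractions depend on the seed.

```python
import random

def heads_fraction(flips, rng):
    """Flip a fair simulated coin `flips` times and return the fraction of heads."""
    heads = sum(rng.random() < 0.5 for _ in range(flips))
    return heads / flips

rng = random.Random(42)  # seeded only so the run can be repeated
for flips in (4, 100, 10_000, 100_000):
    fraction = heads_fraction(flips, rng)
    print(f"{flips:>7} flips: {fraction:.4f} heads, off from 1/2 by {abs(fraction - 0.5):.4f}")
```

With only four flips the fraction can be far from 1/2, while the long runs tend to sit very close to it.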